How to decrease the latency between the clients and your servers

Discover how to decrease application latency with three key strategies to bring your client and server closer.

In the last newsletter, I reviewed latency and why network and geographical proximity are crucial, from how long it takes to pass one packet from source to target to the number of round trips required to transfer the whole payload.

The first way to tackle the issue is to bring the client and server closer so the packets/requests will reach their destination faster. There are a couple of ways to implement it:

Manually deploy servers close to your clients

If all your clients are in Germany, deploying your system in Germany is almost a no-brainer. Data centers exist in most European countries and all major regions of the US. India, Japan, Singapore, New Zealand, Australia, and South Africa also have a couple of data centers.

So if you are serving only one geolocation, choose the closest data center that also matches your budget and offers the infrastructure you need (for example, Amazon Redshift is not available in Canada West 3).
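The selection rule above can be sketched as a small filter-then-minimize function. This is only an illustration: the region names, round-trip times, costs, and service lists below are made-up values, not real provider data, and `pick_region` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    name: str
    rtt_ms: float        # round-trip time measured from your clients (assumed value)
    monthly_cost: float  # estimated cost of your workload there (assumed value)
    services: frozenset  # managed services offered in the region (assumed value)


def pick_region(regions, required_services, budget):
    """Return the lowest-latency region that fits the budget and offers
    every required service, or None when no region qualifies."""
    candidates = [
        r for r in regions
        if r.monthly_cost <= budget and required_services <= r.services
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda r: r.rtt_ms)


# Hypothetical catalog: the closest region lacks the data warehouse service.
REGIONS = [
    Region("region-a", 12.0, 900.0, frozenset({"vm", "sql"})),
    Region("region-b", 18.0, 700.0, frozenset({"vm", "sql", "warehouse"})),
    Region("region-c", 35.0, 500.0, frozenset({"vm", "sql", "warehouse"})),
]

best = pick_region(REGIONS, {"vm", "warehouse"}, budget=800.0)
print(best.name if best else "no region fits")  # region-b
```

Here the closest region is skipped because it lacks a required service, so the second-closest one wins.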

But what if your clients are in multiple regions? What are the options to make everything faster?

The Naive solution - Sharding per region

Each region has its infrastructure, and DNS directs the client to the closest site. You copy all the infrastructure to each region: Application Servers, Databases, Storage, etc.

Pros:

This is a “naive” way to support more geolocations without changing anything in your architecture.

Cons:

Product-wise, when clients move between regions, they must be directed to the original region where their data was written; otherwise, they won’t see their data. Once redirected, they will experience higher latency to that original region.

Costs may be higher, as you need to copy everything and cannot share resources.

Maintenance and monitoring are more complicated.

To get a clear view of all your users, you must pipeline the data from all sources into one data warehouse.

💡 For example, in a food delivery app, getting the list of restaurants in a specific region must be fast for local customers so that browsing turns into orders. On the other hand, most of them don’t need a low-latency response to see their order history when they are away from that region.

Replicating read operations

Another option is to have a “primary” region where all the write operations happen and serve in other regions only read operations by replicating your databases to these regions.

Pros:

Data is available everywhere

Read operations are fast

Cons:

Replication lag might occur, small or large, and users in “read” regions won’t see up-to-date data. This makes the system “eventually consistent.”

High write latency to the primary region.

The primary region will have to support large-scale write operations (depending on your system).

💡 To have this architecture, your application should be stateless: it should not hold data in the memory or filesystem of the node where the application code is running, but in a “remote” data store.
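To make the replication-lag trade-off concrete, here is a toy in-memory model of one primary region with read replicas. It is a sketch under simplifying assumptions: `PrimaryReplicaStore` and the region names are hypothetical, and a real database ships changes continuously rather than through an explicit `replicate()` call.

```python
class PrimaryReplicaStore:
    """Toy model: writes land in the primary, reads are served locally."""

    def __init__(self, primary, replicas):
        self.primary = primary
        self.data = {region: {} for region in [primary, *replicas]}
        self.pending = []  # writes not yet shipped to the replicas

    def write(self, key, value):
        # Every write goes to the primary region, wherever the client is.
        self.data[self.primary][key] = value
        self.pending.append((key, value))

    def read(self, key, region):
        # Serve from the local replica; fall back to the primary if the
        # client's region has no replica.
        store = self.data.get(region, self.data[self.primary])
        return store.get(key)

    def replicate(self):
        # Stand-in for asynchronous replication catching up.
        for key, value in self.pending:
            for region, store in self.data.items():
                if region != self.primary:
                    store[key] = value
        self.pending.clear()


store = PrimaryReplicaStore("eu-central", ["us-east"])
store.write("order:1", "paid")
print(store.read("order:1", "us-east"))  # None: the replica is still behind
store.replicate()
print(store.read("order:1", "us-east"))  # paid
```

The window between `write` and `replicate` is the period in which users in a “read” region see stale data.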

Multi-primary architecture

In a multi-primary architecture, data can be written in every region and is replicated across the other regions. The question is whether the data must be written in all regions to be counted as “written.” In other words, what is the consistency level?

Writing to one node/region is enough, and replication is done in the background.

Write to the majority of nodes/regions (3 out of 5, for example); the rest are replicated in the background.

Write to all nodes/regions.

The consistency level will impact the latency of your write requests and data availability for read requests, meaning reads in different regions may not be consistent if your consistency level of write operation is low.
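A rough way to see how the consistency level drives write latency: if the write is sent to all regions in parallel, it completes when the required number of acknowledgements has arrived, that is, at the k-th fastest region. The sketch below assumes that model; the level names and round-trip times are illustrative, not taken from any specific database.

```python
def required_acks(level, total_regions):
    """Number of regions that must acknowledge a write at a given level."""
    if level == "one":
        return 1
    if level == "quorum":
        return total_regions // 2 + 1
    if level == "all":
        return total_regions
    raise ValueError(f"unknown consistency level: {level}")


def write_latency_ms(level, region_rtts_ms):
    """Latency until enough regions have acknowledged, assuming the write
    is sent to every region in parallel."""
    needed = required_acks(level, len(region_rtts_ms))
    return sorted(region_rtts_ms)[needed - 1]


# Hypothetical round-trip times from the writing node to five regions.
RTTS_MS = [5, 40, 85, 120, 210]

for level in ("one", "quorum", "all"):
    print(level, write_latency_ms(level, RTTS_MS))
# one 5
# quorum 85
# all 210
```

With five regions, a quorum write waits for the third-fastest region, while a write at level “all” is as slow as the farthest one.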

💡 A stateless application will make it easier to achieve a multi-primary architecture, as you can leverage data stores that have already solved the problem and are battle-tested, like ScyllaDB, Amazon Aurora, and Redis Enterprise. Discord, for example, gained a lot of value from this architecture using ScyllaDB.

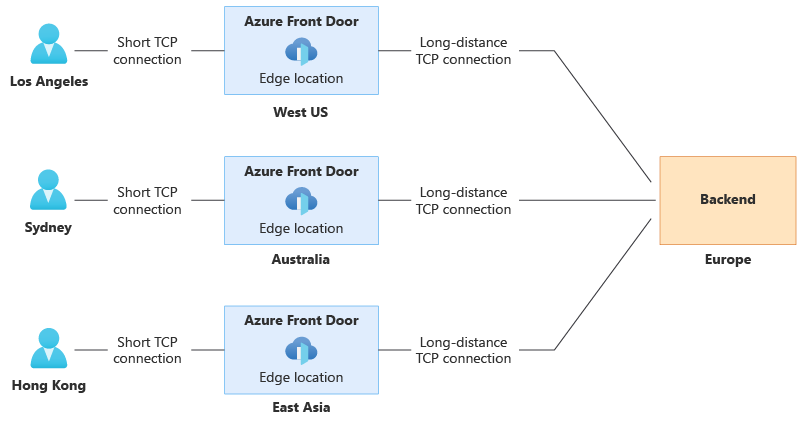

Split TCP

Another approach that can be combined with the solutions above is “Split TCP.” With this technique, we add small “edge” servers that are geographically close to the clients. They terminate the TCP connection (and the TLS session for secure connections), so the handshakes complete quickly over a short distance. These edge servers maintain long-lived connections with the application servers in one or multiple regions, like a connection pool for the application servers in each region.
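A back-of-the-envelope calculation shows where the saving comes from. The sketch assumes one round trip for the TCP handshake, one for a TLS 1.3 handshake, one for the request itself, and an edge server that already holds a warm connection to the application server; the round-trip times are invented for illustration.

```python
HANDSHAKE_ROUND_TRIPS = 2  # assumption: 1 for TCP + 1 for TLS 1.3


def direct_ms(client_origin_rtt_ms):
    """Time to first response when the client connects straight to the origin."""
    return (HANDSHAKE_ROUND_TRIPS + 1) * client_origin_rtt_ms


def via_edge_ms(client_edge_rtt_ms, edge_origin_rtt_ms):
    """Time to first response when a nearby edge terminates the handshakes
    and forwards the request over an already-open connection."""
    handshakes = HANDSHAKE_ROUND_TRIPS * client_edge_rtt_ms
    request = client_edge_rtt_ms + edge_origin_rtt_ms
    return handshakes + request


print(direct_ms(150))        # 450
print(via_edge_ms(10, 145))  # 175
```

The request still has to travel the full distance once, but the handshake round trips are paid only on the short client-to-edge hop.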

The networking between the edge and application servers can be optimized in different ways so data will transfer faster. Unlike your application clients, the edge and application servers do not vary much in their systems, configuration, or hardware, so both ends can be tuned together:

Changing the communication protocol from HTTP/1.1 to HTTP/2 or HTTP/3 (QUIC)

Larger TCP initial congestion window

Connect over the internal network of the cloud infrastructure

Compressing the transferred data
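To illustrate why the initial congestion window matters, the sketch below counts the round trips needed to deliver a response under an idealized slow start: the window doubles every round trip, with no packet loss and no receive-window limit. The 1460-byte segment size and the window values are assumptions chosen for the example.

```python
import math

MSS_BYTES = 1460  # assumed maximum segment size


def round_trips_to_send(payload_bytes, initcwnd_segments):
    """Round trips needed to send a payload when the congestion window
    starts at initcwnd_segments and doubles every round trip."""
    segments_left = math.ceil(payload_bytes / MSS_BYTES)
    cwnd = initcwnd_segments
    round_trips = 0
    while segments_left > 0:
        segments_left -= cwnd
        cwnd *= 2
        round_trips += 1
    return round_trips


print(round_trips_to_send(500_000, initcwnd_segments=10))  # 6
print(round_trips_to_send(500_000, initcwnd_segments=40))  # 4
```

On a long edge-to-origin link, saving two round trips per cold connection can be a meaningful share of the total response time.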

Luckily, we don’t have to implement this ourselves; many cloud providers have implemented a solution for us:

Cloudflare

Amazon CloudFront

Azure Front Door

(Picture taken from Azure Documentation)

Usually, the connection between the edge servers and the application servers goes over the public internet. Those who need to optimize it can subscribe to the premium service of their cloud infrastructure, which routes the traffic within the cloud provider’s private network. AWS, Azure, GCP, and others have an “optimized network”: private, high-throughput links between their data centers, with route optimization and without the congestion from everyone who watches Netflix, YouTube, TikTok, and other media streaming services around the globe (more on that in another post).

Content Delivery Networks

The above solutions and others also provide a CDN (Content Delivery Network), a caching solution for static media that can also cache dynamic content that is requested by many users at the same time or does not change much over time. They replicate the static data across their edge servers, so requests for it never hit your servers.

Depending on your application, you can leverage the CDN by “compiling” dynamic data into static pages and adding them to the CDN. For example, a hotel listing app can keep the hotel and room details pre-rendered in the CDN and load only the pricing & availability from the server after the page is loaded.

If you build a web-based single-page or multi-page application, you can also leverage the CDN by letting it cache your client-side code automatically, so all the frontend code loads faster and your users get a faster experience.
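One common way to tell a CDN what it may cache is the `Cache-Control` response header. The sketch below maps request paths to header values for the hotel example; the URL layout and the lifetimes are hypothetical, and it assumes your CDN honors these standard directives.

```python
def cache_control_for(path):
    """Pick a Cache-Control value per kind of content (hypothetical paths)."""
    if path.startswith("/static/"):
        # Fingerprinted frontend bundles: safe to cache for a long time.
        return "public, max-age=31536000, immutable"
    if path.startswith("/hotels/"):
        # Pre-rendered hotel pages: cached at the edge for a few minutes.
        return "public, s-maxage=300, stale-while-revalidate=60"
    if path.startswith("/api/pricing"):
        # Pricing and availability: always fetched from the origin.
        return "no-store"
    # Anything else: cacheable only after revalidating with the origin.
    return "no-cache"


for path in ("/static/app.3f9a1c.js", "/hotels/berlin/grand", "/api/pricing?hotel=42"):
    print(path, "->", cache_control_for(path))
```

Your servers then only handle the pricing requests, while the pages and the frontend code are served from the edge.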

To recap, I covered three major ways and their pros and cons to decrease network latency between your clients and your servers by bringing them closer using a global deployment strategy with different architectures, TCP splitting, and CDN.

With most systems, I would prioritize deploying the application in the one region with the most users, then using a CDN and Split TCP to optimize access from other regions. If that is not enough, I would consider multi-geolocation deployments with different data/server architectures (and maybe different microservices), as costs and system complexity are higher when going global.

There are also more ways to decrease the latency, but they do not involve geographic proximity, and I will cover them in the following posts.